March 4, 2025

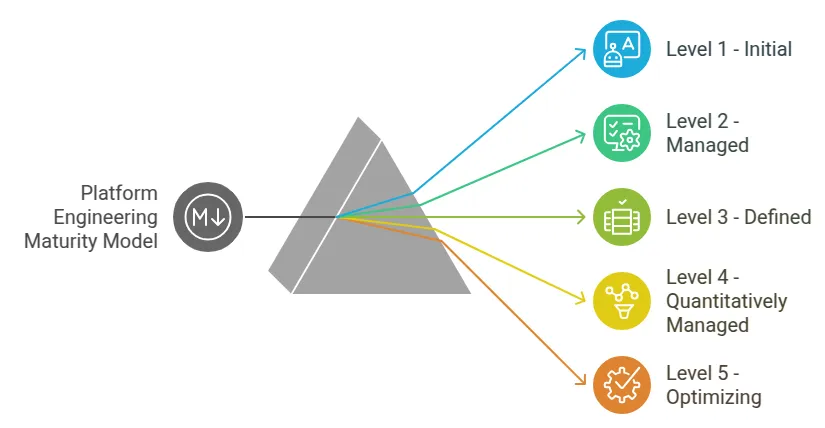

The Platform Engineering Maturity Model: Assessing Organizational Position

By Priyank Dhami

Improwised Technologies Pvt. Ltd.

Navigating Cloud-Native Transformation with the CNCF Maturity Model

The CNCF Cloud Native Maturity Model provides a structured approach to assessing and advancing cloud-native adoption across five key areas: cultural change, process evolution, technology implementation, observability, and resilience. It defines five maturity levels—Learn, Crawl, Walk, Run, and Fly—guiding organizations from initial exploration to full-scale cloud-native operations. The model emphasizes iterative improvements, enabling teams to progressively enhance automation, security, scalability, and reliability. It also highlights the importance of DevOps practices, continuous delivery, declarative infrastructure, and GitOps. By following the maturity model, organizations can systematically adopt cloud-native principles, optimize workflows, and achieve higher efficiency in software delivery and operations.

Level 1: Ad-Hoc and Manual Execution

At this stage, platform engineering practices are inconsistent or nonexistent. Development and operations teams operate in silos, with limited collaboration. Infrastructure provisioning, application deployments, and environment configurations are executed manually, often relying on individual expertise rather than documented processes.

Technical workflows lack standardization, leading to environment drift and configuration inconsistencies. Monitoring and logging solutions, if present, are fragmented, making incident resolution reactive and time-intensive. Version control for infrastructure code is not enforced, resulting in undocumented changes and elevated risk of outages. Organizations at this level typically face prolonged deployment cycles, frequent production failures, and high operational overhead due to repetitive manual tasks.

Level 2: Standardized Foundations

Organizations at this level establish basic consistency in toolchains and processes. Cross-functional teams adopt a common set of tools for source control, such as Git, and implement rudimentary automation scripts for provisioning cloud resources or deploying applications. Infrastructure configurations begin to transition from manual setups to declarative templates, though these may not yet be fully version-controlled or tested.

Centralized logging and monitoring systems, such as Elasticsearch or Prometheus, are deployed to aggregate metrics and logs. However, alerting mechanisms remain underdeveloped, and incident response processes are not systematically enforced. Security practices, such as network policies or access controls, are applied inconsistently across environments. While deployment frequency improves, releases remain prone to delays due to incomplete automation and reliance on human intervention for approvals or error handling.

Level 3: Automated Delivery Pipelines

At this stage, organizations implement continuous integration and delivery (CI/CD) pipelines to automate code builds, testing, and deployments. Infrastructure as Code (IaC) tools like Terraform or AWS CloudFormation are standardized, enabling version-controlled, repeatable environment provisioning. Automated testing frameworks validate infrastructure changes and application functionality before deployment, reducing regression risks.

Monitoring systems evolve to include real-time dashboards and anomaly detection, with tools like Grafana or Datadog providing visibility into system performance. Incident management processes integrate with ticketing systems, and post-mortem analyses become routine. Security practices shift left, with static code analysis and vulnerability scanning embedded into pipelines. Despite these advancements, platform capabilities remain fragmented across teams, with limited self-service options for developers.

Level 4: Self-Service Platforms

Mature organizations operationalize internal developer platforms (IDPs) that abstract underlying infrastructure complexities. These platforms expose APIs, CLI tools, or web interfaces for developers to provision resources, deploy services, and manage lifecycle operations autonomously. Multi-tenancy and role-based access control (RBAC) ensure compliance with security and governance policies.

Platform teams curate standardized service catalogs offering pre-approved configurations for databases, message queues, or Kubernetes clusters. Policy as Code frameworks, such as Open Policy Agent, enforce organizational rules for resource quotas, cost management, and compliance. Observability stacks correlate metrics, logs, and traces across microservices, enabling proactive performance optimization. Deployment frequencies accelerate, and mean time to recovery (MTTR) declines significantly due to automated rollback mechanisms and canary deployments.

Level 5: Continuous Evolution

The highest maturity level is characterized by data-driven optimization and iterative improvement of platform capabilities. Machine learning models analyze telemetry data to predict capacity requirements, detect anomalies, or recommend auto-scaling policies. Chaos engineering practices systematically test system resilience, while automated remediation scripts address common failures without human intervention.

Platforms integrate with organizational governance frameworks to dynamically enforce compliance mandates, such as GDPR or HIPAA, across all workloads. Feedback loops between developers, platform engineers, and security teams ensure continuous refinement of tools and processes. Organizations achieve near-zero downtime deployments, with infrastructure costs optimized through real-time usage analytics.

Assessing Organizational Maturity

To evaluate maturity, organizations must audit existing processes against objective criteria:

-

Automation Coverage: Percentage of infrastructure provisioning, testing, and deployment tasks executed without manual intervention.

-

Environment Consistency: Degree of parity between development, staging, and production environments, measured by configuration drift analysis.

-

Incident Response Efficiency: Metrics such as MTTR, frequency of critical outages, and percentage of incidents resolved via automated remediation.

-

Developer Autonomy: Time required for developers to provision resources or deploy code without platform team assistance.

-

Compliance Adherence: Frequency of audit failures or security breaches attributed to misconfigurations.

Quantitative benchmarks, such as DORA (DevOps Research and Assessment) metrics, provide industry standards for deployment frequency, lead time, and failure rates. Platform teams should also conduct qualitative assessments through developer surveys to identify friction points in toolchains or documentation.

Consequences of Maturity Stagnation

Organizations that fail to advance through maturity levels face measurable operational and strategic risks:

-

Operational Inefficiency: Manual processes consume engineering bandwidth, diverting resources from innovation to maintenance. High toil reduces team capacity to address technical debt or scalability demands.

-

Security Vulnerabilities: Inconsistent enforcement of security policies increases exposure to breaches. Manual configurations are prone to human error, leading to misconfigured cloud storage, open network ports, or outdated dependencies.

-

Compliance Failures: Lack of automated governance mechanisms results in noncompliance with regulatory requirements, incurring legal penalties or reputational damage.

-

Developer Attrition: Fragmented tools and delayed deployments frustrate engineering teams, reducing productivity and increasing turnover rates.

-

Scalability Limitations: Inability to automate resource provisioning inhibits growth, forcing organizations to overprovision infrastructure or delay feature releases.

| Domain | Level 0 | Level 1 | Level 2 | Level 3 | Level 4 |

|---|---|---|---|---|---|

| Automation | Manual | Scripted | Self-Service | GitOps | Predictive |

| Governance | None | Audits | Static Checks | Dynamic Policy | ML-Driven |

| Observability | Log Files | Metrics | Centralized | Cross-Service | Prescriptive |

Interconnected Aspects of Maturity

Each aspect of the model should be evaluated independently, but they are interrelated. Improving one aspect may require reaching a certain level of maturity in another. For example, advancing in automation may depend on having a foundational level of standardization and governance.

Implementing the Model

Implementing the Platform Engineering Maturity Model requires intentional planning and discipline. It involves recognizing the current state of your organization’s cloud native transformation and using resources like the Cloud Native Maturity Model for evaluation.

Conclusion

The Platform Engineering Maturity Model offers a structured approach to diagnose an organizations ability to deliver scalable, secure, and efficient platforms. Progression through maturity levels correlates directly with reduced operational risk, accelerated delivery cycles, and improved resource utilization. Organizations stagnating at lower levels incur technical debt, security liabilities, and competitive disadvantages. Conversely, those investing in automation, self-service platforms, and data-driven optimization position themselves to adapt to evolving architectural demands and market conditions. Regular assessment against this model ensures alignment between platform capabilities and organizational objectives, mitigating the consequences of operational inertia.

November 19, 2025

Unlocking Developer Potential: How Platform Engineering Transforms Developer Experience

By Shyam Kapdi

Improwised Technologies

Pvt. Ltd.

By Shyam Kapdi

Improwised Technologies

Pvt. Ltd.

November 14, 2025

How to Build a High-Impact Internal Developer Platform: Step-by-Step Blueprint, Tools, and Best Practices

By Shyam Kapdi

Improwised Technologies

Pvt. Ltd.

Optimize Your Cloud. Cut Costs. Accelerate Performance.

Struggling with slow deployments and rising cloud costs?

Our tailored platform engineering solutions enhance efficiency, boost speed, and reduce expenses.